Computer science professor will use the award to study how day-to-day software developers consider ethics when they create machine learning systems

By Bryan Hay

Justin Smith, assistant professor of computer science, has received a $60,000 award from the Google Research Scholar Program to support research on how to make machine learning systems more ethical.

“Everyone is aware that machine learning is becoming much more common in society. And machine learning software is increasingly making automated decisions that really impact people’s lives,” Smith says. “These automated decisions made by machine learning algorithms can often be really detrimental. We want to understand how we can make these systems more ethical.”

Working with co-principal investigator Brittany Johnson, assistant professor of computer science at George Mason University, Smith will use the Google grant to study how day-to-day software developers consider ethics when they create a machine learning system.

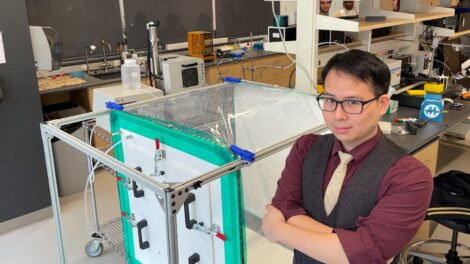

Justin Smith, assistant professor of computer science

“There hasn’t been much research that looks at the perspective of the people who are actually creating the systems and writing the code line by line that implements machine learning into software,” he says. “How can we better support them? That’s really the big gap in the literature that this work is trying to address.”

Ethical questions in computer science are wide-ranging, affecting everything from political polls to product advertising. Criminal justice systems use software to predict whether a person will commit a crime and often factor those results into bail and sentencing decisions, Smith says, adding that algorithms need to be written to prevent racial bias.

“A common issue is that there is racial bias in terms of how the system will look at two people who are similar by all measures, but have a different race,” he says. “And one of them will get a really long prison sentence and the other one will get a really short prison sentence. How do we create systems that are fair in those regards?”

To better understand the role ethics plays in data-driven, or machine learning, software development and how to support ethical practices, Smith and Johnson will pursue three lines of work. They will investigate how developers discuss ethics; examine ethical signals, such as terminology and tools, that may correlate with ethical considerations in machine learning software; and look for ways to encourage and support those considerations during development.

“Our preliminary exploration found that while ethical signals do exist, they are often weak or located in places that lack visibility, such as the source code,” Smith and Johnson noted in their grant proposal. “One solution we plan to investigate is the ability to provide frameworks that make it easier to talk about or consider ethics in online communities.”

Smith says that grants from the Google Research Scholar Program are extremely competitive and usually go to faculty at large research institutions.

“The fact that our computer science department has created a supportive environment to allow me to compete against schools like MIT and CMU, that’s a mark of approval of what we are doing at Lafayette,” he says. “Google is interested in the types of research we’re doing here, and the grant also supports our students who are assisting in the research.”